SmartLights

Motion activated lighting with solar charged battery power

Here's the problem space for the first application. Relatively high powered LED light strips that consume about 13 watts will be powered by a 7Ah sealed lead acid battery, and the only power available for recharging the battery comes from a 2 1/2 watt solar panel. Figuring roughly 5 watt hours out of the panel on a given day (and this might be quite optimistic), there is only enough power to run the LEDs at full intensity for about 23 minutes every 24 hours (5 Wh / 13 W is roughly 0.38 hours). This may be plenty, or it might fall way short, so if the LED lights aren't smart they may be useless. [Note: the Coleman panel planned for this project was marketed as a "2.5 watt" panel but more recent information suggests it may be far less.]
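The budget arithmetic above can be sketched in a few lines of Python. This is only a rough model: it ignores charger, battery and wiring losses, and `sustainable_duty` assumes LED power scales linearly with PWM duty, which is an assumption and not something measured for this project. The function names are made up for illustration.

```python
def full_power_minutes(daily_harvest_wh: float, load_watts: float) -> float:
    """Minutes of full-intensity light that one day's harvest can pay for."""
    if load_watts <= 0:
        raise ValueError("load_watts must be positive")
    return daily_harvest_wh / load_watts * 60.0


def sustainable_duty(daily_harvest_wh: float, load_watts: float,
                     minutes_wanted: float) -> float:
    """PWM duty (0..1) that stretches the harvest over minutes_wanted of light.

    Assumes LED power is proportional to duty (an assumption, not measured).
    """
    if minutes_wanted <= 0:
        raise ValueError("minutes_wanted must be positive")
    energy_needed_wh = load_watts * minutes_wanted / 60.0
    return min(1.0, daily_harvest_wh / energy_needed_wh)


if __name__ == "__main__":
    # Figures from the text: 5 Wh per day harvested, 13 W of LEDs.
    print(full_power_minutes(5.0, 13.0))
    # Duty that would allow a full hour of light per day on the same budget.
    print(sustainable_duty(5.0, 13.0, 60.0))
```

The second function is the reason the default light level matters: halving the duty roughly doubles the minutes of light available from the same harvest.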

The basic strategy will be to pay attention to outside ambient light/dark cycles and running average usage to pick a default LED light level (set with pulse width modulated switching of power to the LEDs), then wait a few seconds after motion is detected near the lighted area (i.e. the time it takes to walk close), and turn the lights on. The PIR sensor will be hacked to allow as much precision as possible with the determination of "motion vs no motion". After some number of seconds of no motion the lights will be turned off. Alternatively, depending on the state of the battery, the lights will be dimmed before being turned off completely.
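A minimal sketch of that strategy as a small state machine follows. The state names, the timing values and the `dim_before_off` flag are all hypothetical; the real firmware would run on the microcontroller and the decision to dim would come from the battery measurement. Python is used here only to make the policy easy to simulate.

```python
from dataclasses import dataclass

OFF, ARMING, ON, DIMMED = "off", "arming", "on", "dimmed"


@dataclass
class LightPolicy:
    approach_delay_s: float = 3.0   # assumed time to walk close
    hold_s: float = 30.0            # assumed "no motion" time before leaving ON
    dim_s: float = 15.0             # assumed time spent dimmed before OFF
    state: str = OFF
    since: float = 0.0              # time the state was entered or motion last seen

    def _enter(self, state: str, now: float) -> None:
        self.state = state
        self.since = now

    def step(self, now: float, motion: bool, dim_before_off: bool = False) -> str:
        """Advance the policy one sample and return the resulting state."""
        if self.state == OFF:
            if motion:
                self._enter(ARMING, now)
        elif self.state == ARMING:
            # Wait out the approach delay before spending any energy on light.
            if now - self.since >= self.approach_delay_s:
                self._enter(ON, now)
        elif self.state == ON:
            if motion:
                self.since = now            # restart the hold timer
            elif now - self.since >= self.hold_s:
                self._enter(DIMMED if dim_before_off else OFF, now)
        elif self.state == DIMMED:
            if motion:
                self._enter(ON, now)
            elif now - self.since >= self.dim_s:
                self._enter(OFF, now)
        return self.state
```

Calling `step()` once per second with the PIR reading is enough to exercise it; the PWM level for each state is left out because it depends on the default light level chosen from the ambient light and usage history.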

If none of this is enough, the combination of low light and a brief "on period" may have to be paired with a "tap here to get more light" sign or the like. The sign would of course be illuminated reliably. That is, this would be similar to a cell phone with a very low battery turning its display off aggressively.

The microcontroller will log some behavior in EEPROM to offer clues about actual performance and feed back "better" default light levels (i.e. throttle the PWM so the lights might, for example, be just bright enough to be usable unless the battery is well charged). One open question is whether usable information can be logged without a sense of time beyond the CPU clock. If this system will "just work", a clock will be avoided; otherwise something like a Maxim DS3231 will be added. (The extreme ambient temperature cycles expected would make a cheaper clock like a DS1307 a waste of time.) It seems like the simple photocell monitoring outside light cycles should be enough to support a gradual, "good enough" calibration of "CPU cycles per day".
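One way the "CPU cycles per day" calibration might work is to count ticks between successive dusk transitions seen by the photocell and smooth the result. The sketch below is an assumption about how that could be done, not the project's firmware: the thresholds, the smoothing factor `alpha` and the class name are all invented for illustration, and the hysteresis band is there so a passing cloud near the threshold does not register as a second dusk.

```python
class DayLengthEstimator:
    """Estimate timer ticks per day from light-to-dark transitions."""

    def __init__(self, dark_below=200, light_above=300, alpha=0.2):
        self.dark_below = dark_below      # assumed ADC reading meaning "dark"
        self.light_above = light_above    # assumed ADC reading meaning "light"
        self.alpha = alpha                # smoothing factor for the running average
        self.is_dark = None               # unknown until a decisive reading arrives
        self.last_dusk_tick = None
        self.ticks_per_day = None

    def sample(self, tick, photocell):
        """Feed one photocell reading; return the current estimate (or None)."""
        if photocell < self.dark_below:
            now_dark = True
        elif photocell > self.light_above:
            now_dark = False
        else:
            return self.ticks_per_day     # inside the hysteresis band: no decision
        if self.is_dark is False and now_dark:
            # Light-to-dark edge: treat as dusk and measure the last "day".
            if self.last_dusk_tick is not None:
                measured = tick - self.last_dusk_tick
                if self.ticks_per_day is None:
                    self.ticks_per_day = float(measured)
                else:
                    self.ticks_per_day += self.alpha * (measured - self.ticks_per_day)
            self.last_dusk_tick = tick
        self.is_dark = now_dark
        return self.ticks_per_day
```

Dusk drifts with season and cloud cover, so the estimate is only ever approximate, which fits the "gradual, good enough" goal.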

Speaking of temperature cycles, the solar charger is itself quite smart, and one of the things it has to get right is the battery float charge voltage. It will use a thermistor mounted to one of the battery terminals for this, to avoid charging errors that would tend to shorten battery life. Also, the upper limit for charging is 50 centigrade. If the battery and charger regularly go over 50C a small fan may be required. The microcontroller can trivially run the fan as needed to lower temperature as long as circulation is possible.
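The fan decision could be as simple as a thermostat with hysteresis, sketched below. Only the 50C charging limit comes from the text; the fan on/off thresholds are assumed values picked to sit safely below that limit, and the function names are hypothetical.

```python
CHARGE_LIMIT_C = 50.0   # upper limit for charging, from the text


def charging_allowed(temp_c: float) -> bool:
    """True while the battery/charger temperature is under the charging limit."""
    return temp_c < CHARGE_LIMIT_C


def fan_should_run(temp_c: float, fan_on: bool,
                   on_above_c: float = 45.0, off_below_c: float = 40.0) -> bool:
    """Thermostat with hysteresis; thresholds are assumptions, not measured.

    The gap between the two thresholds keeps the fan from chattering on and
    off when the temperature hovers around a single set point.
    """
    if temp_c >= on_above_c:
        return True
    if temp_c <= off_below_c:
        return False
    return fan_on   # between thresholds: keep doing whatever we were doing
```

Since the fan itself draws from the same battery, the thresholds would need tuning against the energy budget once real enclosure temperatures are known.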

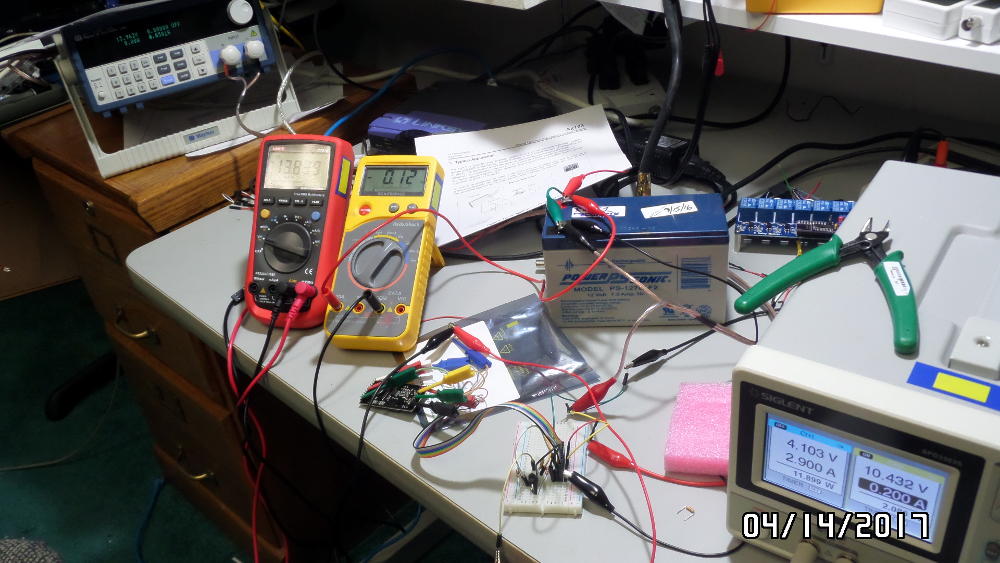

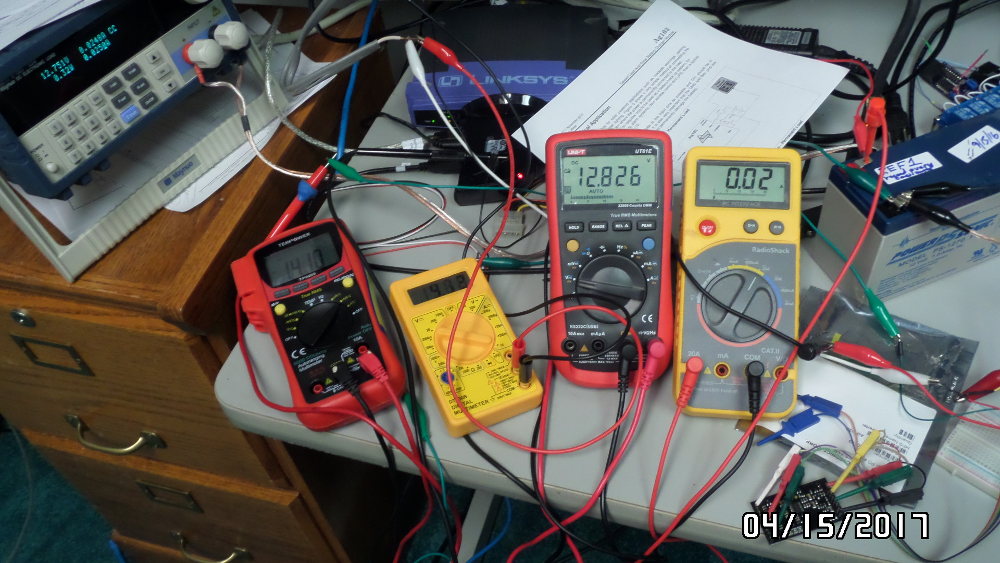

Initial charger circuit testing (the Silvertel AG103 MPPT charger is the small board near the corner of the yellow meter)

First impressions of the Silvertel AG103 charger are good. The PCB is well made and the choice of MCU is great (an ST STM32 series 32 bit ARM in a nice TSSOP20 package). The whole thing is on a roughly 30x50mm board with two male headers the right length to solder onto a main board. The current plan is to neatly solder to the header pins while confirming this charger is going to get the job done and then move it to a permanent home on a PCB that has the rest of the circuitry.

Using a lab supply to pretend to be a solar panel doesn't cut it, because the charger board is obviously changing the load it presents to the supply and the supply's reaction is surprising. But there must be some sweet policy in the firmware, as the "hunting" from one voltage/current demand on the supply to the next eventually stops. It would make for some fascinating graphs to have the supply controlled by a simple Python program while logging the voltage and current consumed.

But three low voltage panels in series are being mounted on a board to live for a while on the roof where the system is being developed. This will be three two watt panels with a combined open circuit voltage similar to that of the target panel (a Coleman 2 1/2 watt panel). An exact match with a second Coleman panel seems desirable, but might be made moot if this initial setup works well enough to get the target application going quickly.

Back to the PCB: as a carrier for the charger, this will necessarily be relatively large in relation to the amount of stuff on it. That will be a refreshing change after a lot of very dense boards that attempted to squeeze every penny out of the cost involved. But an alternative prototype board vendor is available for this design (PCBs.io), and the first rough cut comes out to about $12.50 for four copies of the board, which seems excellent. On the other hand, turnaround with PCBs.io is almost three weeks, while oshpark.com is two weeks or less in return for a cost of $16 for three boards.

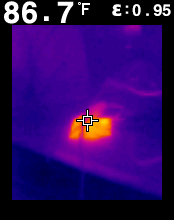

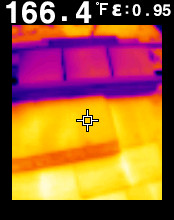

Thermal image of charger board passing a couple watts to load and battery while battery supplies an eight watt load. About 20F rise over ambient temperature.

Breadboard charger testing

Here's the charger being driven by three little five volt panels in series (nearing sunset, but sun long since behind tall trees). From left to right is the Maynuo electronic load asking for a whopping 25mA, the red meter showing volts out of the panels, little yellow showing mA out of panels, propped up red showing battery voltage, and rightmost yellow meter showing amps in/out of the battery. Tomorrow about 11am when the sun gets fully over the trees there should be more action.

Test solar panels

Three 5V, 2 watt panels in series. The MPPT charger settles to a load on the panels around 15 volts in full sun, but current out of the panels was only 140mA the first go around. That's roughly two watts.

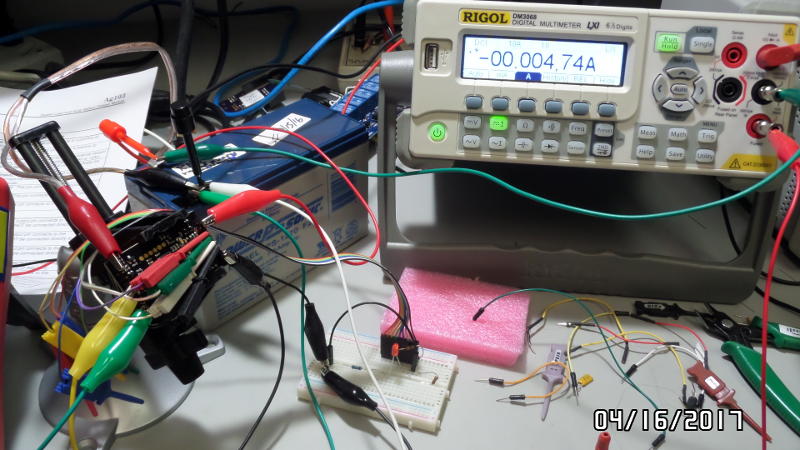

Rigol voltage/current measurement

Here's a Rigol DMM measuring voltage (and current, alternating) and putting it on the LAN where a simple Python program can pick it up and log it. This is after sunset and the charger board is drawing just a few mA out of the battery. The idle current is only 3-5mA in this case, beating the datasheet spec by a factor of two. The MCU should add very little to this, leaving the PIR's idle current as the remaining concern. If that's very high, another FET can be used to disconnect it during daylight hours.
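A logging program along those lines might look like the sketch below. It is not the program actually used here. The SCPI query strings (`MEAS:VOLT:DC?`, `MEAS:CURR:DC?`) and the use of a raw TCP socket are assumptions that should be checked against the meter's programming guide, and the host and port are placeholders. The meter access is passed in as a `query` function so the logging loop can be tried without any hardware.

```python
import csv
import socket
import time


def scpi_query(host, port, command, timeout=2.0):
    """Send one SCPI query over a raw TCP socket and return the reply text.

    Assumes the instrument accepts newline-terminated SCPI on this port.
    """
    with socket.create_connection((host, port), timeout=timeout) as sock:
        sock.sendall((command + "\n").encode("ascii"))
        return sock.recv(4096).decode("ascii").strip()


def log_samples(query, out, count, interval_s=60.0,
                sleep=time.sleep, clock=time.time):
    """Alternate voltage and current readings and write them as CSV rows."""
    writer = csv.writer(out)
    writer.writerow(["unix_time", "volts", "amps"])
    for i in range(count):
        volts = float(query("MEAS:VOLT:DC?"))   # assumed command string
        amps = float(query("MEAS:CURR:DC?"))    # assumed command string
        writer.writerow([f"{clock():.0f}", volts, amps])
        if i < count - 1:
            sleep(interval_s)


if __name__ == "__main__":
    # Placeholder address and port; substitute the meter's real settings.
    meter = lambda cmd: scpi_query("192.168.1.50", 5555, cmd)
    with open("battery_log.csv", "w", newline="") as f:
        log_samples(meter, f, count=10)
```

Because voltage and current are read one after the other, the two values in a row are not strictly simultaneous, which matters little at a one minute sample interval.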

After gathering more data (described below), it is clear that the charger idle current will be a real factor. The charger will consume 1.5 watt hours during a given "night" (i.e. the whole stretch of time the panel puts out no usable energy). It's clear now that the MCU has to be disconnected entirely when the ambient light sensor indicates there is nothing to be gotten from the solar panel. The good news is that 1.5 watt hours translates to only about six minutes of full intensity light for the first target application.

It should be noted here that unlike the charger (which uses DC-DC conversion with about 85% efficiency to get its power), the MCU and PIR (and any status LEDs, measurement resistor dividers, etc.) need power at a much lower voltage than the LED lights. A simple linear regulator providing five volts for the MCU et al. translates to a "seven volt dummy load". That is, if the PIR and MCU were to consume 10 mA while active, then 70mW will be converted into heat by the regulator. An alternative supply circuit that is 85-95% efficient at low current levels is available. The honest truth at the moment is that it is being avoided because the regulator IC involved is only available in a QFN surface mount package, and this package is not usable by a large majority of enthusiasts. A power supply daughter board that allows for the two alternatives may be the best solution, although that approach creates other issues.
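The "seven volt dummy load" arithmetic is easy to check. The sketch below assumes a nominal 12 V battery (so 12 V minus 5 V gives the seven volt drop) and ignores the regulator's own quiescent current. The function names are hypothetical, and the switching figure simply applies the 85% efficiency mentioned above.

```python
def linear_loss_mw(v_in: float, v_out: float, load_ma: float) -> float:
    """Heat dissipated in a linear regulator: the dropped voltage times the load."""
    return (v_in - v_out) * load_ma


def switcher_loss_mw(v_out: float, load_ma: float, efficiency: float) -> float:
    """Loss in a switching converter delivering the same load at a given efficiency."""
    delivered_mw = v_out * load_ma
    return delivered_mw * (1.0 / efficiency - 1.0)


if __name__ == "__main__":
    # 10 mA at 5 V from an assumed 12 V battery.
    print(linear_loss_mw(12.0, 5.0, 10.0))      # linear regulator loss in mW
    print(switcher_loss_mw(5.0, 10.0, 0.85))    # switching converter loss in mW
```

With these numbers the linear regulator wastes more power than it delivers, while the switching alternative would lose under 10 mW.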

And this raises another basic issue, which is the integrity of electrical connections in this system. The first application involves a relatively unprotected environment that may suffer extreme heating/cooling cycles and condensing humidity. The plan is to put the electronics into an air tight box with one weatherproof connector for battery, panel, PIR, ambient light sensors and controlled LED lights. The expectation is that the extremely delicate charger and control PCBs, and presumably any daughter board for DC to DC conversion, will stay in dry air. That leaves temperature rise within the enclosure as a remaining issue.

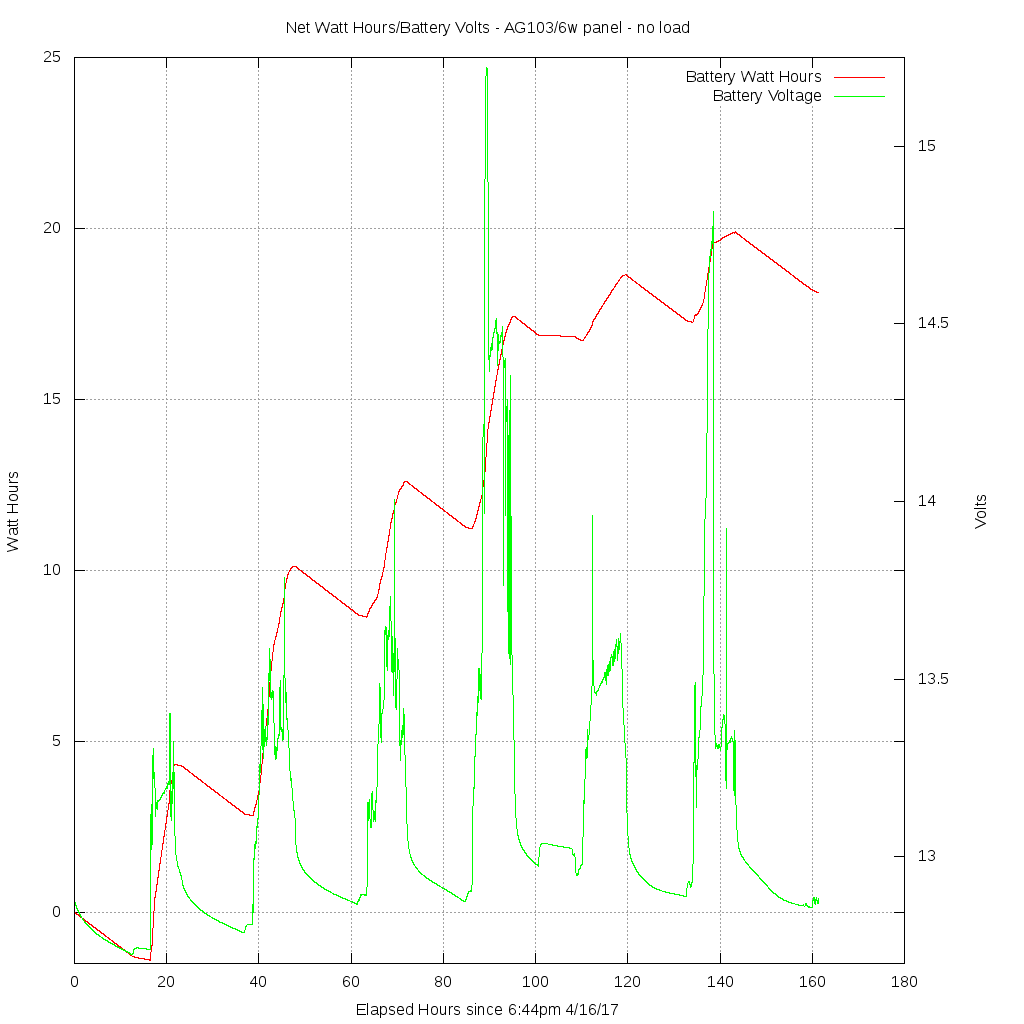

AG103 MPPT charger and test panel performance

The net watt hours into (positive) or out of (negative) the battery, and its voltage as it is charged and discharged, are graphed with the charger connected to a 7Ah sealed lead acid battery and the six watt test panel. Clouds have affected power both days. The panel set is in a heavily wooded area and only gets direct sun a few hours a day in the best case. There is no load attached yet, so when there is no power from the panels the only draw is the charger's idle current, which has been delightfully well below its 10mA specification. The charger's idle current is mysterious, however, spending long periods around 1.7mA and long periods at 8mA, while at other times it jumps up and down as would be expected if the MCU is suspending itself and periodically waking to check whether its environment has changed. However (a big however), the big electrolytic cap specified to go across the panel output wasn't properly attached until about hour 40, so it will be interesting to see whether this makes for more stable idle (dark) conditions, leading the charger to use very little current.

It's also obvious now that the simple resistive photo-detector that will tell if any extra lighting is needed or not would be useful as additional data to make sense of the charging process. The big question about this charger is whether it is spending any significant time "hunting" for the maximum power point from the panel, and in so doing, missing out on proper power from the panel.

But the latest data from a "wall to wall blue sky" day after so much overcast time this week reveals a new issue, which is way WAY lower charging current than would be expected at this point. The measurement system is precise and its accounting of the amp hours going into and out of the battery should be close to reality (samples are every 60 seconds). But from hours 89 to 95 there was some very wonky behavior observed. First, the roughly 130mA of current from the panels pushed the battery voltage to 15.2, which is way past the limit spec'd for the charger (it should not put out more than 14.6 volts under any circumstances). Then the charger obviously dropped to a constant voltage output of 14.4 volts and the current into the battery dropped to around 50mA. This was too low. There was the same (roughly 180mA) available from the panels, but the charger limited current to 50-odd mA at 14.4 volts after plenty of time for the battery to readjust to the lower voltage. The result was that the remainder of this day was spent putting way too little current into the battery. The testing was started with the battery being able to accept at least 3Ah. It's only accepted a net one and a quarter Ah so far. Something's wrong with this picture. It will be interesting to see what happens in the morning when the charger turns output back on. It ought to put as much current as is available into the battery. If it continues to throttle current to 50mA that's going to be a deal breaker for this charger unless something has caused a gross accounting error and the battery is actually close to fully charged.

Delving into what's actually going on with current flows depends on "counting coulombs" with the battery to have a proper opinion about the energy really going into the battery. An alternative to an expensive DMM may be needed for in situ measurements. Just by chance, collaboration with an area engineer is resulting in the perfect tool for this: an LTC2944 "battery fuel gauge" the MCU can interrogate via I2C. One (for battery) or two (battery and panel) would allow very precise measurements and allow determining just how efficient the system is. This, of course, has nothing at all to do with the interests of users of this lighting system, but everything to do with the interests of the system designer who might steer folks toward or away from the AG103 charger!
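Until a fuel gauge chip is in place, "counting coulombs" from logged DMM samples amounts to summing current over time. The sketch below assumes evenly spaced samples (60 seconds apart, as in the measurements described above) with current positive into the battery. It uses a simple rectangle rule and is only an illustration of the accounting, not the LTC2944's method or the actual analysis script.

```python
def integrate_samples(samples, interval_s=60.0):
    """Return (amp_hours, watt_hours) for evenly spaced (volts, amps) samples.

    Each sample is treated as constant over its interval (rectangle rule).
    Positive amps means current flowing into the battery.
    """
    hours = interval_s / 3600.0
    amp_hours = 0.0
    watt_hours = 0.0
    for volts, amps in samples:
        amp_hours += amps * hours
        watt_hours += volts * amps * hours
    return amp_hours, watt_hours


if __name__ == "__main__":
    # One hour at the throttled rate seen in the log: about 50 mA at 14.4 V.
    one_hour = [(14.4, 0.050)] * 60
    print(integrate_samples(one_hour))
```

At the throttled 50 mA, each hour adds only about 0.05 Ah, which shows why a battery able to accept 3 Ah would take days to fill at that rate.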

But this forces a related issue, which is a choice of MCU. For the simplest imaginable implementation of this project something as simple as an ATTiny85 would be adequate to implement very simple policies to run the lights at X brightness for Y seconds after motion is detected and the battery is deemed OK to draw from. However, this project is aimed at delivering a very small number of prototypes into just a few application scenarios (the "Little Library" at Durham Scrap Exchange being the one that will be published here). It honestly doesn't matter in terms of cost whether a $1 or $4 MCU is used, but that does make a big difference in the range of capabilities that can be supported. The design defined by the schematic below is heavily subscribing the pins of an ATTiny84A and there has already been nervousness about capacity issues. Together with the COLLAPSE of the price of an ATMega328p chip (now $2!), that chip is going to be popped into the design, at least until the build environment for a more interesting chip like an STM32F030F4P6 is prepared.

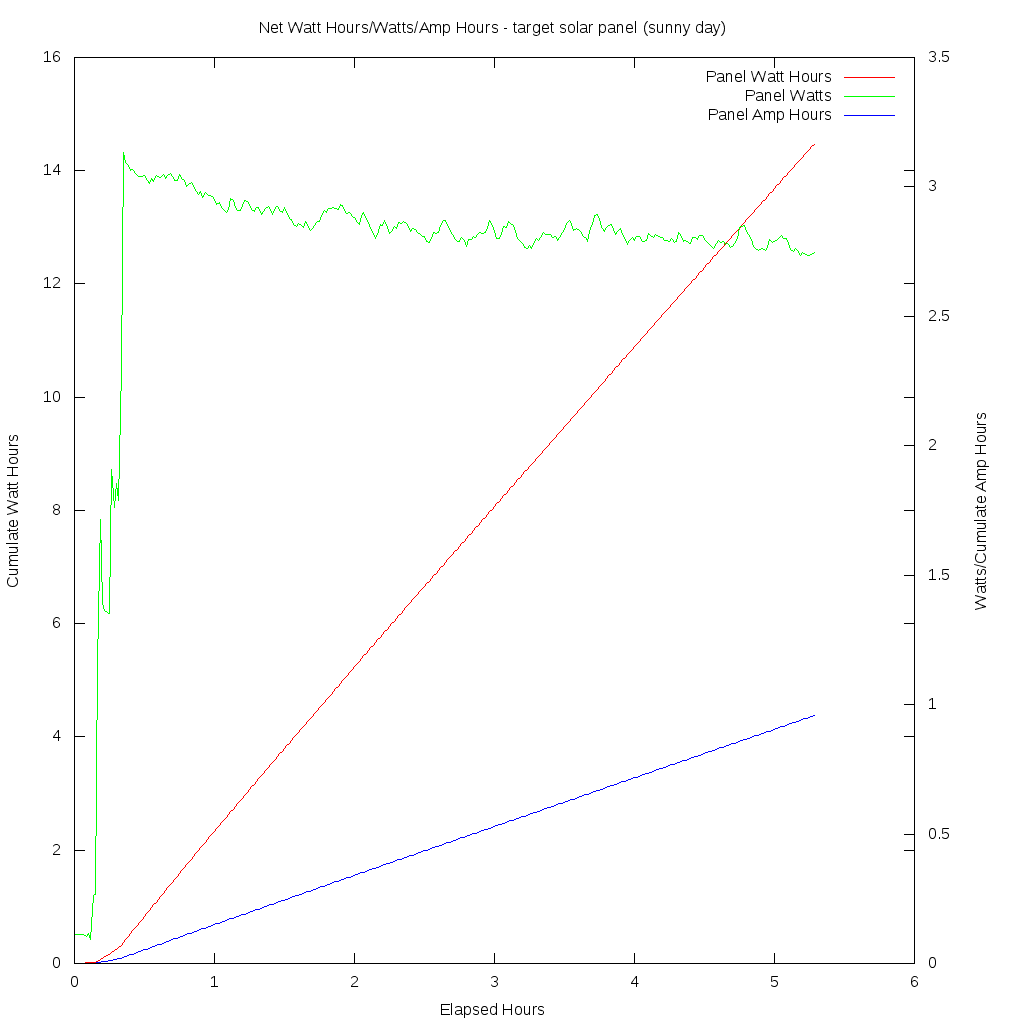

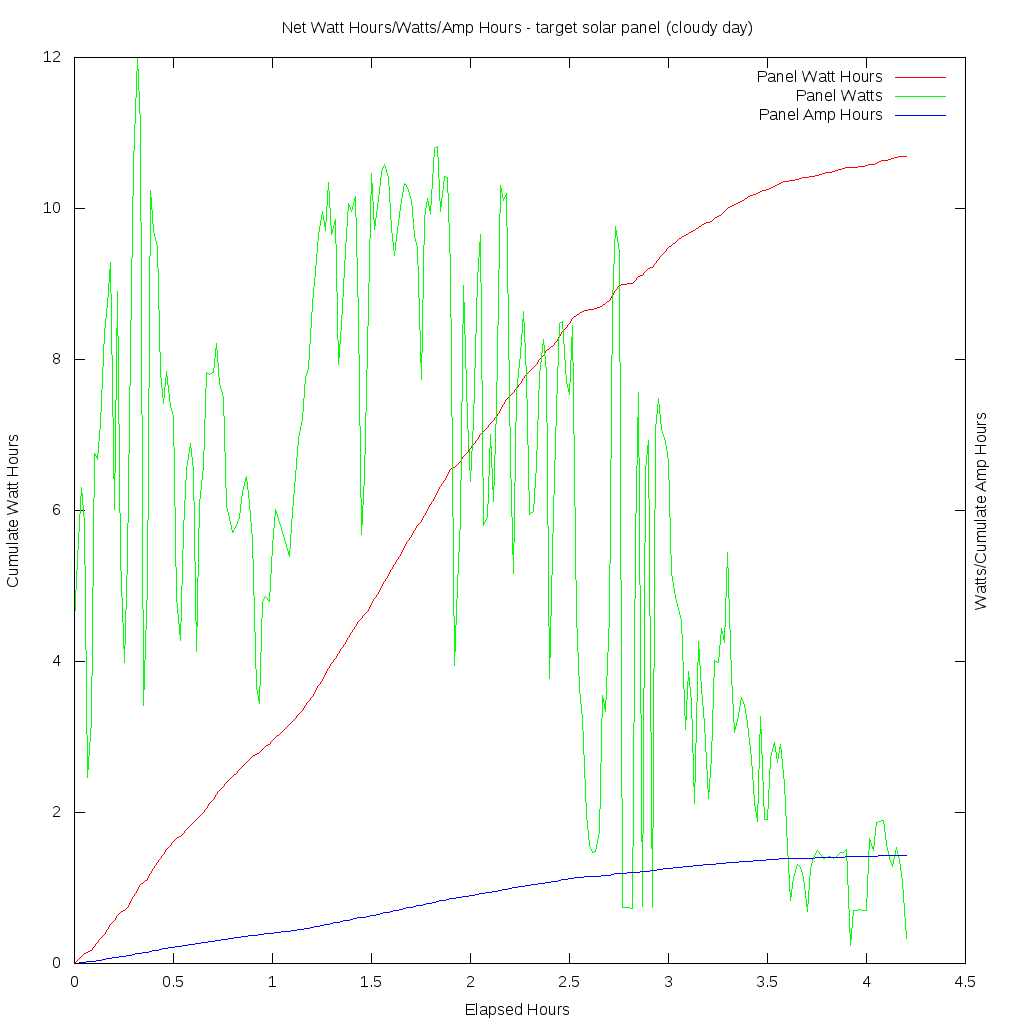

Target solar panel performance

This is a graph of the power drawn from the new target solar panel on a cloudy day. The panel is rated at 10 watts but has only been seen to put out five watts in full sun so far.


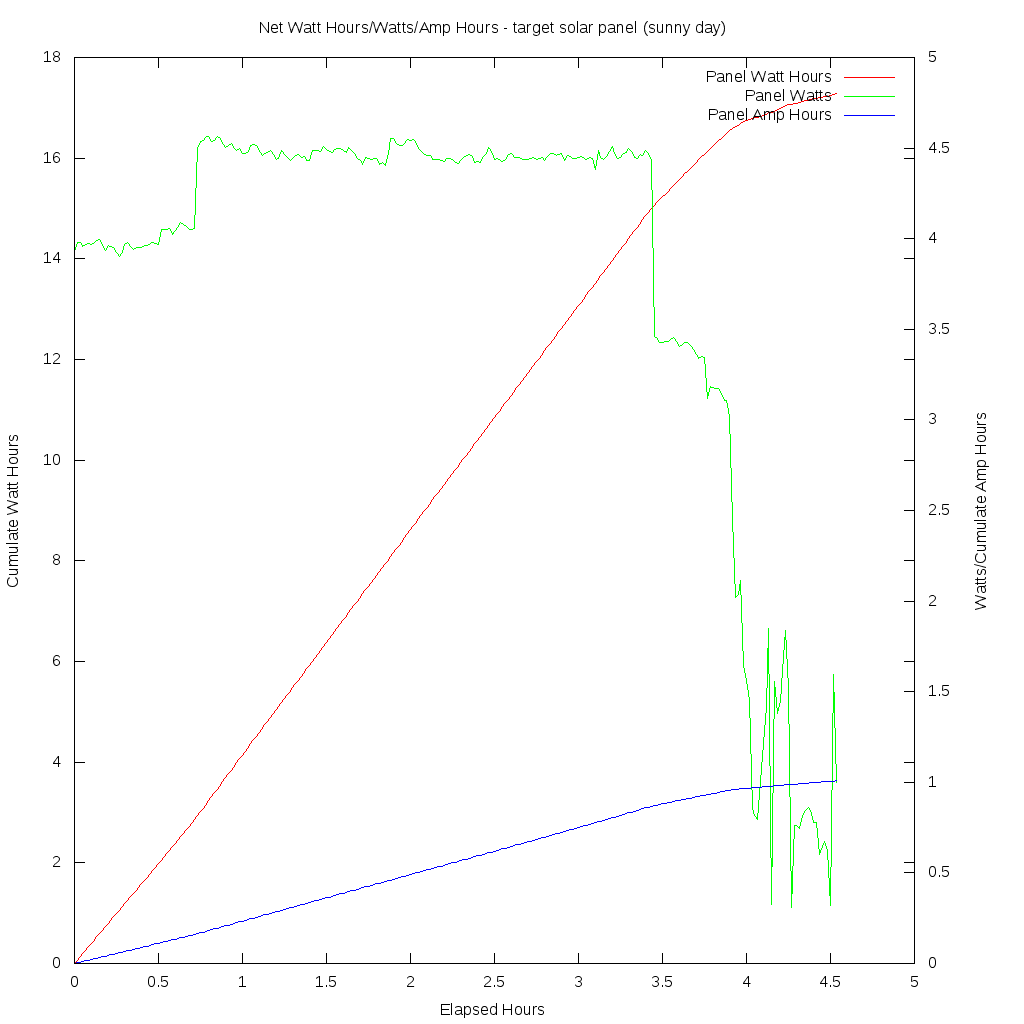

This graph shows the same panel on a sunny day. Output becomes erratic as the sun is blocked intermittently by a tall pine tree. The step changes in power output are disturbing. The 1/2 watt increase in output at the 3/4 hour mark and more than one watt drop at the 3 1/2 hour mark are hard to understand. It's as if the controller's policy is to establish a new maximum power point much less frequently than the actual rate of change as the panel reaches and leaves peak irradiance. The algorithm for MPPT doesn't seem to be very good: certainly not as good as the "perturb and observe" strategy described in this Microchip white paper. But again, it's possible the controller's policy is simply not applied frequently enough. Still the idea of ignoring a good fraction of a watt hour because inappropriate conversion settings are held for long periods of time is annoying.
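For reference, the textbook form of "perturb and observe" is short enough to sketch. This is the generic algorithm as commonly described, not the AG103 firmware and not code from the Microchip paper; `measure_power` stands in for setting the converter's operating voltage and reading back panel power, and the step size and voltage limits are arbitrary illustrative values.

```python
def perturb_and_observe(measure_power, v_start, step=0.2, iterations=50,
                        v_min=0.0, v_max=25.0):
    """Hill-climb toward the maximum power point.

    measure_power(v) is a hypothetical callback returning panel power (watts)
    when the converter holds the panel at v volts.
    """
    v = v_start
    p_prev = measure_power(v)
    direction = 1.0
    for _ in range(iterations):
        # Perturb: nudge the operating voltage one step in the current direction.
        v = min(v_max, max(v_min, v + direction * step))
        # Observe: if power dropped, the last nudge was the wrong way, so reverse.
        p = measure_power(v)
        if p < p_prev:
            direction = -direction
        p_prev = p
    return v


if __name__ == "__main__":
    # Toy panel curve with its peak at 15 V, purely for demonstration.
    toy_panel = lambda v: max(0.0, 6.0 - 0.1 * (v - 15.0) ** 2)
    print(perturb_and_observe(toy_panel, v_start=12.0))
```

The algorithm never settles exactly; it oscillates within a step or so of the peak. How often the loop runs determines how quickly it follows changing sun, which is the suspected weak point in the behavior graphed here.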


Test panel power output droop with temperature rise

This interesting graph of the six watt test panel output at the start of a day shows that, with the very steady full sun of that day, the power output falls as the panel temperature goes up. The panel (actually three 5V panels in series, as mentioned above) is on a wooden mount resting on an asphalt shingle roof. Both roof and panel become very hot in full sun, as the IR images below demonstrate.

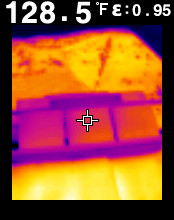
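The droop described above is roughly what a linear temperature derating model would predict. In the sketch below, the coefficient of -0.4% per degree C is an assumed, typical figure for crystalline silicon panels. It was not measured on this panel and was not taken from its datasheet; the 25C reference is the usual rating condition.

```python
def derated_power(rated_watts: float, cell_temp_c: float,
                  coeff_per_c: float = -0.004, ref_temp_c: float = 25.0) -> float:
    """Linear derating of panel power with cell temperature.

    coeff_per_c is an assumed typical value, not a measurement of this panel.
    """
    return rated_watts * (1.0 + coeff_per_c * (cell_temp_c - ref_temp_c))


if __name__ == "__main__":
    # Six watt test panel at a hypothetical 65C cell temperature.
    print(derated_power(6.0, 65.0))
```

Fitting the coefficient to the logged data and the IR temperature readings would show whether heat alone accounts for the drop.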

DRAFT (UNTESTED!) Schematic of management circuit

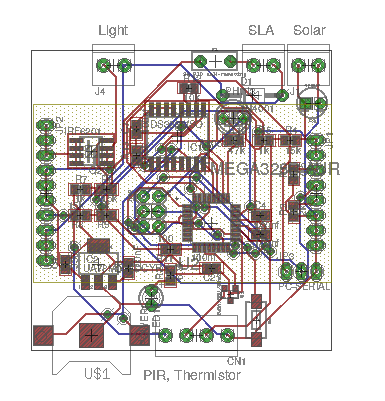

DRAFT (INCOMPLETE, UNTESTED) Initial PCB layout

This board is far from complete. The high current paths aren't routed with the proper trace widths, the silk screen labels are a total mess, etc. But the final board is expected to be similar to this after the changes listed below. Just as soon as the charger/battery/panel breadboard setup can be confirmed for basic operation PCBs will be ordered. The initial goal was April 19th, but this is slipping with changes of plans.

Changes in the pipes:

- Power via small DC to DC daughterboard.

- Plain solder pad connections to PCB assuming transition to weather proof connector with PCB enclosure

- MCU (e.g. Arduino Mini) as daughterboard